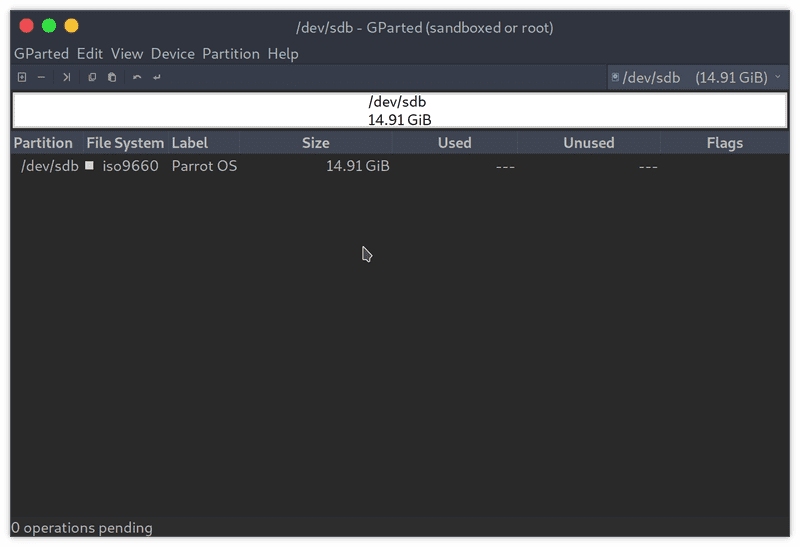

LVM, LUKS, and a bunch of other things put headers with magic sequences at the beginning of the device too; that's why we often call them "headers". Zeroing the first 4MB with dd tends to take care of those, though. You may also find the backup copy of the GPT at the end of the device, which sometimes gets in the way.

mdadm has a --zero-superblock option that wipes superblock signatures. Metadata version 1.2 puts its superblock near the beginning of the device (4 KiB from the start), followed by the data space. Metadata version 0.90 puts its superblock at the end of the underlying device, which means that with RAID 1 (mirror) you can access the data on a single device before assembling the RAID, a nice trick to know when you want to boot from RAID.

I think I had a "device or resource busy" message when I tried to use wipefs to do the job, but I cannot reproduce the problem right now.

Sector size (logical/physical): 512 bytes / 4096 bytes

The operation merely adds the device to the filesystem structures and creates some block […]. The operation is instant and does not affect existing data. A device with an existing filesystem detected by blkid(8) will prevent device addition and has to be forced. Alternatively, the filesystem can be wiped from the device using e.g. wipefs. The signature on the RAID member is listed as (device, offset, type, UUID):

sda3 0x1fff0000 linux_raid_member d27fc13c-fe90-62e2-cb20-1669f728008a
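The offset 0x1fff0000 reported for sda3 is what one would expect for a version 0.90 superblock sitting at the end of the partition. As a rough sketch, assuming the 0.90 layout reserves 64 KiB aligned to a 64 KiB boundary at the end of the device, the expected position can be computed in the shell. The function name `md090_sb_offset` and the 512 MiB partition size are assumptions made for illustration; neither comes from the original posts.

```shell
#!/bin/sh
# Sketch: byte offset of a version 0.90 md superblock for a device of a given size.
# Assumption: the superblock occupies the last 64 KiB-aligned 64 KiB block.
md090_sb_offset() {
    size_bytes=$1
    reserved=65536    # 64 KiB
    # Round the size down to a multiple of 64 KiB, then step back one block.
    echo $(( size_bytes / reserved * reserved - reserved ))
}

# Hypothetical 512 MiB partition (536870912 bytes).
printf '0x%x\n' "$(md090_sb_offset 536870912)"
```

On a real system the size would probably come from something like `blockdev --getsize64 /dev/sda3`, run as root.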

You need to stop the md array that sda3 is part of. You need to install sys-fs/mdadm to manage RAID arrays.
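Stopping the array and clearing the member could look roughly like the sketch below. The array name /dev/md0 is an assumption (check /proc/mdstat for the real one), and the helper names `run` and `release_member` are made up for this example. The script is a dry run by default and only prints the mdadm commands; set DRY_RUN=0 and run it as root to actually execute them.

```shell
#!/bin/sh
# Sketch: release a RAID member before wiping it.
# Dry run by default: commands are printed, not executed, unless DRY_RUN=0.
DRY_RUN=${DRY_RUN:-1}

run() {
    echo "+ $*"
    if [ "$DRY_RUN" = "0" ]; then "$@"; fi
}

release_member() {
    array=$1     # hypothetical array name, e.g. /dev/md0
    member=$2    # partition to free, e.g. /dev/sda3
    run mdadm --stop "$array"                # stop the array so the member is no longer busy
    run mdadm --zero-superblock "$member"    # clear the md superblock on the member
}

release_member /dev/md0 /dev/sda3
```

Both steps are destructive for the array, so it is worth reading the printed commands before switching the dry run off.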

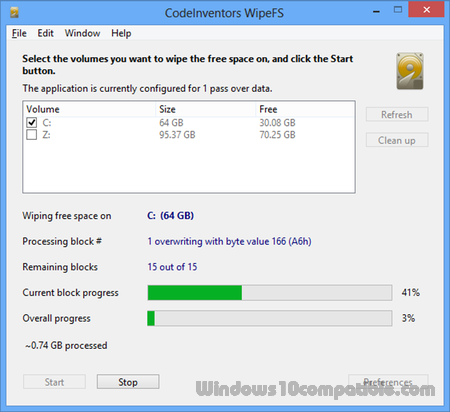

The RAID member was started at boot because RAID support is built into your kernel (or loaded as a module).

Gentoo Forums, Installing Gentoo: "wipe out part containing a linux_raid_member file system" (posted Mon 7:10 am)

When I clear out a hard disk to reinstall Gentoo from scratch I usually run:

dd if=/dev/zero of=$ bs=1M status=progress

What is the "correct" amount for "count" in this case (to properly clear the Linux RAID information)?

Depends on the RAID version. Assuming it's an mdadm RAID, v1.1 and v1.2 put the metadata at or near the start of the partition, while v0.90 and v1.0 put it at the end. Have you tried using wipefs instead of using dd and guessing how much to wipe? According to its man page:

wipefs can erase filesystem, raid or partition-table signatures (magic strings) from the specified device to make the signatures invisible for libblkid.

There seems to be a misunderstanding of some sort. pvcreate is telling you that the device /dev/sda1 is still in use. This can be anything: it could still be mounted, or be part of a RAID array, or be device-mapped, or looped, or be held by a running process (for example, if you're in the middle of copying the device with dd). wipefs would be telling you the same thing if you didn't use -f:

-f, --force: Force erasure, even if the filesystem is mounted.

If the device is still in use, that's a serious issue, as whatever is using the device might modify data on it. So you really should umount the device first (or otherwise make sure it's not in use anymore) before doing anything with wipefs, pvcreate, mkfs and the like. wipefs at best only erases some magic bytes on the device; it does not solve any other problem for you. If the device is still mounted, you have to umount it yourself (or reboot and hope it won't be mounted again). If it's still in use, you'll have to find out what is using it and why, and then decide how to stop that.

To only wipe the beginning part of the card, add a count parameter to the dd command:

dd bs=512 count=100000 if=/dev/zero of=/dev/sdb

The count parameter makes dd copy zeroes to that number of sectors only and then quit. This will be much faster than wiping the full SD card. As an aside, the dd command is also useful for writing disk images.
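To see what bs and count do without touching a real disk, the same dd invocation can be tried on a scratch file. This is only a sketch: the temporary file stands in for a device such as /dev/sdb, the 8 MiB size and the 'x' filler are arbitrary choices, and it assumes GNU coreutils (bs=1M, cmp -n, head -c).

```shell
#!/bin/sh
# Sketch: zero only the first 4 MiB, using a scratch file instead of a real device.
img=$(mktemp)                                       # stand-in for e.g. /dev/sdb
head -c 8388608 /dev/zero | tr '\0' 'x' > "$img"    # 8 MiB of non-zero filler

# bs=1M count=4 writes 4 MiB of zeroes and then stops.
# conv=notrunc keeps the rest of the file; it is not needed on a block device.
dd if=/dev/zero of="$img" bs=1M count=4 conv=notrunc 2>/dev/null

# Check: the first 4 MiB compare equal to /dev/zero ...
cmp -s -n 4194304 "$img" /dev/zero && echo "first 4 MiB zeroed"
# ... and the first byte after that region is still the filler character.
dd if="$img" bs=1M skip=4 count=1 2>/dev/null | head -c 1; echo
```

The amount written is simply bs times count, so bs=512 count=100000 from the text covers about 51 MB. Remove the scratch file with rm -f "$img" when done.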
